February 1, 2026 · Kuba Rogut

Put simply, procedural audio is the art of creating sound effects from scratch using algorithms, right when you need them. Instead of playing back a pre-recorded file, you're essentially giving the computer a recipe to "cook up" a sound on the fly. This is the secret sauce for creating audio that feels alive and responsive in games and interactive media.

Think about a video game where every footstep, bullet ricochet, and gust of wind sounds just a little bit different, reacting perfectly to the world around you. That’s not done by packing the game with thousands of individual audio files. That’s procedural audio at work. It bypasses static, pre-recorded assets in favor of mathematical models and rules that synthesize sound in real time.

It's a bit like being a master chef. A traditional sound designer is reheating a pre-made meal—they play a static .wav file. A procedural sound designer, on the other hand, uses a recipe (the algorithm) and fresh ingredients (like noise generators and basic waveforms) to create a unique dish every single time. This means the sound can adapt instantly. A footstep on grass sounds different from one on gravel because the underlying "recipe" is tweaked by the game's data on the fly.

The payoff for creating sound with algorithms goes way beyond just adding a bit of variety. This approach tackles some of the biggest headaches in modern media production. The numbers speak for themselves: by 2023, procedural methods were behind an estimated 72% of the sound effects in top-grossing mobile games. It’s a clear signal that developers and filmmakers are catching on to how efficient this can be.

Here’s a quick rundown of the main advantages:

Procedural audio is fundamentally about shifting from a static library of sounds to a dynamic system that generates sound. It's the difference between looking at a photograph and stepping into a living, breathing world.

In the past, getting procedural audio into a project meant you needed a deep understanding of digital signal processing and some serious coding skills. Thankfully, things have changed. Modern tools are finally making this powerful tech accessible to everyone. Platforms like SFX Engine, for instance, use AI to turn simple text prompts—like "heavy metal door scraping on a concrete floor"—into a fully-formed procedural sound effect.

This bridges the gap between a highly technical process and an intuitive, creative workflow. It gives video editors, podcasters, and developers the power to craft custom audio without needing a degree in audio engineering. It’s a huge step forward in making dynamic sound a practical tool for any project. For a closer look at the core principles, check out our guide on what sound design is and how it all comes together.

To really see the difference, it helps to put the two approaches side-by-side.

| Attribute | Procedural Audio | Traditional Sample-Based Audio |
|---|---|---|
| Source | Generated in real-time by algorithms | Pre-recorded audio files (.wav, .mp3) |
| Variation | Infinite; every sound can be unique | Limited to the number of recorded variations |
| Flexibility | Highly adaptable; parameters can change dynamically | Static; playback is fixed |
| File Size | Very small (code and parameters) | Large (high-quality audio files) |
| CPU Usage | Higher; requires real-time processing | Lower; primarily disk I/O and decoding |
| Workflow | Designing a system or "recipe" for sound | Recording, editing, and managing audio assets |

Ultimately, neither approach is "better" in every situation. Traditional samples are perfect for iconic, unchanging sounds, while procedural audio excels where dynamic, adaptive sound is needed to create a truly immersive experience.
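To put rough numbers on the "File Size" row, here is a back-of-the-envelope sketch in Python. The clip count, clip length, and parameter count are hypothetical figures chosen only for illustration, and the calculation assumes uncompressed 16-bit mono PCM; real projects usually ship compressed audio, so the gap would be smaller in practice.

```python
def pcm_bytes(duration_s, sample_rate=48_000, bit_depth=16, channels=1):
    """Size in bytes of uncompressed PCM audio (no file header)."""
    frames = round(duration_s * sample_rate)
    return frames * (bit_depth // 8) * channels


# Hypothetical sample library: 300 footstep clips, half a second each.
sample_library_bytes = 300 * pcm_bytes(0.5)

# Hypothetical procedural model: 6 surfaces x 12 parameters, 4 bytes each.
# The synthesis code itself adds to this, but it is shared by every sound.
procedural_param_bytes = 6 * 12 * 4

print(f"samples:    {sample_library_bytes / 1_000_000:.1f} MB")
print(f"parameters: {procedural_param_bytes} bytes")
```

Under these assumptions the sample library comes to about 14.4 MB, while the parameter data is a few hundred bytes.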

To really get what procedural audio is all about, you have to peek under the hood at the engines that conjure these sounds out of pure code. These are the different methods of sound synthesis—the fundamental recipes for turning mathematical rules into rich, textured audio. Think of them as different schools of art for a sound designer. Each one starts with a raw idea and shapes it into something real.

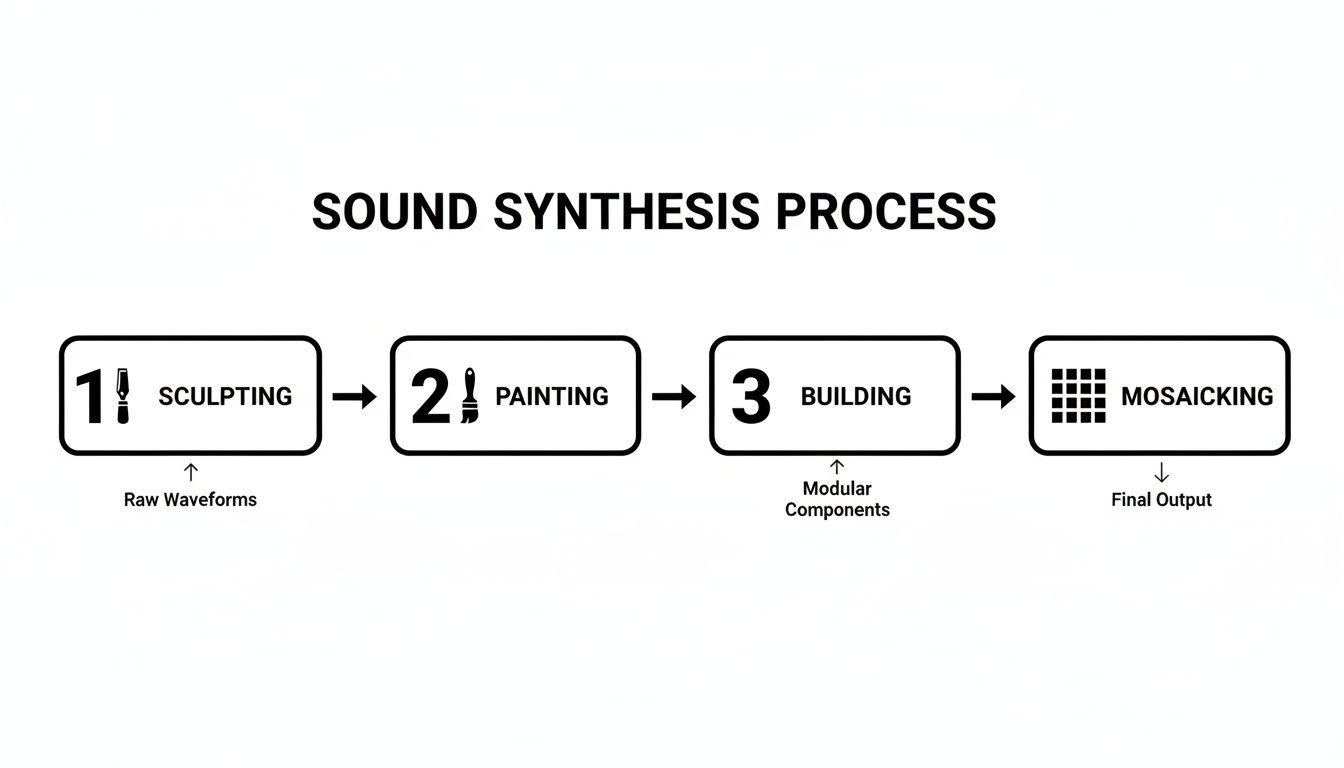

Instead of getting bogged down in the heavy math, the best way to grasp these concepts is through some simple analogies. We can imagine the process as sculpting, painting, building, or even creating a mosaic. Each technique takes a basic material and transforms it into something expressive and recognizable. Let's dig into the four main methods that power most of the procedural audio you hear today.

Picture a sculptor with a massive block of marble. The final statue is already in there somewhere; the artist's job is to chip away all the extra stone to reveal the form hiding inside. That’s a pretty good way to think about subtractive synthesis.

It all starts with a harmonically rich, complex waveform—that’s our block of marble. This is usually a basic shape like a sawtooth or square wave, which is packed with a wide range of frequencies. From there, a filter is used to "carve away" or subtract the frequencies you don't want, shaping the sound's character.

This approach is fantastic for creating things like warm synthesizer pads, deep rumbling bass tones, and even realistic wind effects. You start with a full, noisy texture and simply refine it down to what you need.
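As a minimal sketch of the carving idea, the Python below starts from white noise (the "block of marble") and runs it through a one-pole low-pass filter to get a soft, wind-like hiss. The function names, the 44.1 kHz sample rate, and the 400 Hz cutoff are assumptions made for this example, and the filter is about the simplest one possible rather than what a production synth would use.

```python
import math
import random

SAMPLE_RATE = 44_100  # samples per second (assumed for this sketch)


def white_noise(n_samples, seed=0):
    """The raw material: noise with energy spread across all frequencies."""
    rng = random.Random(seed)
    return [rng.uniform(-1.0, 1.0) for _ in range(n_samples)]


def one_pole_lowpass(samples, cutoff_hz, sample_rate=SAMPLE_RATE):
    """Subtract high frequencies with a simple one-pole low-pass filter."""
    # Smoothing coefficient derived from the cutoff frequency.
    alpha = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
    out, state = [], 0.0
    for x in samples:
        state += alpha * (x - state)  # move a fraction of the way to the input
        out.append(state)
    return out


def wind(duration_s=1.0, cutoff_hz=400.0, seed=0):
    """Filtered noise: a lower cutoff gives a duller, more distant wind."""
    n = round(duration_s * SAMPLE_RATE)
    return one_pole_lowpass(white_noise(n, seed), cutoff_hz)
```

Sweeping `cutoff_hz` slowly over time is one simple way to make the wind seem to rise and fall.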

Alright, let's switch from sculpting to painting. An artist using additive synthesis is like a painter who begins with a totally blank canvas. They add layers of color, one brushstroke at a time, to build up a complete picture. Instead of starting with something complex and taking bits away, this technique starts from complete silence and adds simple sound waves together.

The foundational element here is the sine wave, the purest tone imaginable, containing just a single frequency. By layering dozens, or even hundreds, of these sine waves at different frequencies, amplitudes, and phases, you can construct just about any sound you can imagine. It’s a meticulous process, for sure, but it gives you an incredible amount of control over the final sonic texture.

Additive synthesis is all about construction, not reduction. By carefully combining simple, pure tones, a sound designer can "paint" an incredibly detailed auditory picture, from the shimmering overtones of a bell to the complex, layered roar of a futuristic engine.
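The layering idea can be sketched in a few lines of Python. Each partial is a `(frequency, amplitude, phase)` tuple, and the output is simply their sum. The `BELL_PARTIALS` recipe is an illustrative assumption: the frequency ratios are inharmonic to give a metallic colour, but they are not measured from a real bell.

```python
import math

SAMPLE_RATE = 44_100  # samples per second (assumed for this sketch)


def additive_tone(partials, duration_s, sample_rate=SAMPLE_RATE):
    """Sum sine waves; partials is a list of (freq_hz, amplitude, phase_rad)."""
    n = round(duration_s * sample_rate)
    out = []
    for i in range(n):
        t = i / sample_rate
        out.append(sum(amp * math.sin(2.0 * math.pi * freq * t + phase)
                       for freq, amp, phase in partials))
    return out


# Hypothetical bell-like recipe: inharmonic partials with falling amplitudes.
# The amplitudes sum to 1.0, so the result stays within [-1, 1].
BELL_PARTIALS = [
    (440.0 * 1.00, 0.50, 0.0),
    (440.0 * 2.76, 0.25, 0.0),
    (440.0 * 5.40, 0.15, 0.0),
    (440.0 * 8.93, 0.10, 0.0),
]

tone = additive_tone(BELL_PARTIALS, 0.1)
```

Giving each partial its own decay envelope would be the natural next step toward a convincing bell, since higher partials usually die away faster.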

So what if, instead of just sculpting or painting the sound, you could actually build the virtual object that makes the sound? That's the whole idea behind physical modelling synthesis. This technique doesn't just mimic a sound; it simulates the actual physical properties of an object and the forces that cause it to make noise.

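Think about trying to build a virtual guitar inside a computer. To do it, you'd have to define the physical properties of its parts, such as the strings and the wooden body, along with the force of each pluck.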

The algorithm then calculates, in real-time, how all these different elements would interact with each other to produce a sound. This gives you an unbelievable level of realism and, more importantly, interactivity. If you change the "wood" of the virtual guitar body, the tone changes. If you pluck the "string" harder, the sound gets brighter and louder, just like it would in the real world. This is the magic behind creating truly dynamic sounds like footsteps on different surfaces, the clatter of falling objects, or the creak of an old wooden door.
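A full guitar simulation is well beyond a short example, so the sketch below swaps in Karplus-Strong, a classic and much simpler plucked-string algorithm. A short delay line stands in for the string, a burst of noise stands in for the pluck, and averaging neighbouring samples stands in for energy loss. The parameter names and default values are assumptions for illustration. Note that in this simplified version `pluck_strength` only changes loudness; it does not make the tone brighter the way a richer model would.

```python
import random

SAMPLE_RATE = 44_100  # samples per second (assumed for this sketch)


def pluck(frequency_hz, duration_s, damping=0.996, pluck_strength=1.0,
          seed=0, sample_rate=SAMPLE_RATE):
    """Karplus-Strong plucked string."""
    rng = random.Random(seed)
    # The delay-line length sets the pitch, like the length of a real string.
    period = max(2, round(sample_rate / frequency_hz))
    # The pluck: fill the string with a burst of noise.
    string = [pluck_strength * rng.uniform(-1.0, 1.0) for _ in range(period)]
    out = []
    for i in range(round(duration_s * sample_rate)):
        idx = i % period
        current = string[idx]
        neighbour = string[(idx + 1) % period]
        out.append(current)
        # Averaging acts as a gentle low-pass filter; damping removes energy.
        string[idx] = damping * 0.5 * (current + neighbour)
    return out


note = pluck(220.0, 0.5)
```

Lowering `damping` gives a duller, shorter note, loosely like a muted string.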

Our final technique is a bit like creating a beautiful mosaic out of tiny shards of colored glass. Granular synthesis works by taking a pre-existing sound sample and chopping it up into minuscule fragments called "grains," which are often just a few milliseconds long. These grains can then be completely re-imagined—rearranged, stretched, layered, pitched, and played back in countless new ways.

Let's say you have a single, clean recording of a water drop. By dicing it up into tiny grains, you can create entirely new sonic worlds.

This technique is incredibly powerful for transforming one simple sound into a complex, evolving soundscape. It's a go-to tool in procedural audio for creating ambient textures like rain, crowd murmurs, or otherworldly atmospheres, all from a tiny initial sound file. Each of these methods offers a unique pathway to the same destination: creating dynamic, believable, and endlessly variable sound from the ground up.
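Here is a minimal granular sketch in Python, assuming the source recording is already loaded as a list of float samples. It cuts grains from random positions, fades each one with a Hann window to avoid clicks, and scatters them across an output buffer. The function names and defaults (441-sample grains, roughly 10 ms at 44.1 kHz) are assumptions for this example; pitch shifting and time stretching are left out to keep it short.

```python
import math
import random


def hann(n):
    """Hann window: fades a grain in and out."""
    if n < 2:
        return [1.0] * n
    return [0.5 - 0.5 * math.cos(2.0 * math.pi * i / (n - 1)) for i in range(n)]


def granulate(source, out_len, grain_len=441, n_grains=200, seed=0):
    """Scatter windowed grains cut from `source` across a new buffer."""
    if len(source) < grain_len or out_len < grain_len:
        raise ValueError("source and output must be at least one grain long")
    rng = random.Random(seed)
    window = hann(grain_len)
    out = [0.0] * out_len
    for _ in range(n_grains):
        src_start = rng.randrange(0, len(source) - grain_len + 1)
        dst_start = rng.randrange(0, out_len - grain_len + 1)
        gain = rng.uniform(0.3, 1.0)  # vary loudness from grain to grain
        for i in range(grain_len):
            out[dst_start + i] += gain * window[i] * source[src_start + i]
    return out
```

Raising `n_grains` for the same output length thickens the texture from sparse drips toward a continuous wash.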

The theory behind procedural audio is fascinating, but things really click when you see how it works in a real production pipeline. This is where the algorithms and artistry collide, turning a set of rules into living, breathing sound. It’s a total shift from the old way of just dragging and dropping audio files; instead, you’re building an intelligent system that creates sound as it’s needed.

Let's take a common but surprisingly complex sound to illustrate this: footsteps in a video game. In a traditional workflow, a sound designer might record hundreds of individual footstep samples. You'd need clips for every surface—wood, gravel, mud, metal—and for every speed, like walking, jogging, or sprinting. Before you know it, you have a gigantic library of static files to manage.

In a typical game studio, a procedural setup connects the game engine's data directly to a sound synthesis model. This is usually managed through specialized game audio middleware like Wwise or FMOD. The workflow essentially builds a bridge between the game's virtual world and the sounds it makes.

Here's how it usually breaks down:

1. Identify Game Parameters: First, the developer figures out which in-game variables should influence the sound. For our footstep example, that would be things like surface_type (wood, gravel, etc.), player_speed, and maybe even player_weight.

2. Design the Audio Model: Next, the sound designer gets to work building a procedural audio "recipe." They might use a physical modeling synth to simulate the thud and scrape of a foot hitting the ground and a granular synth to generate the crunchy texture of the surface material.

3. Map Parameters to the Model: This is where the magic happens. The surface_type variable from the game engine might be linked to the texture in the granular synthesizer. Meanwhile, player_speed could control the intensity and timing of the footstep impact in the physical model. For anyone looking to get their hands dirty with this, understanding how to add audio in Unreal Engine is a great starting point for connecting game logic to sound.
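The mapping step can be sketched in plain Python, independent of any particular middleware. Everything here is hypothetical: the preset values, the `FootstepParams` fields, and the scaling constants are invented for illustration and are not how Wwise or FMOD represent parameters. The point is only the shape of the idea: game variables go in, synthesis settings come out.

```python
from dataclasses import dataclass

MAX_SPEED = 8.0  # assumed sprint speed in metres per second

# Hypothetical per-surface settings for the granular texture layer.
SURFACE_PRESETS = {
    "wood":   {"grain_density": 20.0,  "cutoff_hz": 1200.0},
    "gravel": {"grain_density": 180.0, "cutoff_hz": 4500.0},
    "mud":    {"grain_density": 60.0,  "cutoff_hz": 600.0},
    "metal":  {"grain_density": 10.0,  "cutoff_hz": 7000.0},
}


@dataclass
class FootstepParams:
    impact_gain: float      # strength of the impact layer, 0..1
    step_interval_s: float  # time between footsteps
    grain_density: float    # grains per second in the texture layer
    cutoff_hz: float        # brightness of the surface texture


def clamp(value, low, high):
    return max(low, min(high, value))


def map_footstep(surface_type, player_speed, player_weight=75.0):
    """Turn game state into settings for the sound model."""
    if surface_type not in SURFACE_PRESETS:
        raise ValueError(f"unknown surface: {surface_type!r}")
    preset = SURFACE_PRESETS[surface_type]
    speed = clamp(player_speed / MAX_SPEED, 0.0, 1.0)
    weight = clamp(player_weight / 100.0, 0.5, 1.5)
    return FootstepParams(
        impact_gain=clamp((0.3 + 0.7 * speed) * weight, 0.0, 1.0),
        step_interval_s=0.7 - 0.45 * speed,  # faster movement, quicker steps
        grain_density=preset["grain_density"],
        cutoff_hz=preset["cutoff_hz"],
    )


walking = map_footstep("gravel", player_speed=1.5)
sprinting = map_footstep("gravel", player_speed=7.0)
```

In a real project the game engine would call something like `map_footstep` every time a footstep event fires, then hand the result to the synthesis model.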

This approach gives designers incredible, fine-grained control, but it's not for the faint of heart. It requires serious technical expertise and a whole lot of time to build and tune these intricate systems from scratch.

While the middleware approach is still powerful, a new wave of tools is democratizing procedural audio. AI-powered platforms are changing the game entirely, swapping out complex, node-based editors for simple text prompts. It’s a massive leap from building systems by hand to having them created for you automatically.

Instead of meticulously building that footstep model piece by piece, a creator using a tool like SFX Engine can just describe what they want.

By typing a prompt like 'heavy armored footsteps walking slowly on wet gravel inside a cave,' the AI does the heavy lifting. It interprets the request and generates a procedural model behind the scenes, synthesizing the distinct sounds of clanking metal, splashing water, and crunching stone, complete with the cavern's natural reverb.

Suddenly, the steep learning curve is gone. This empowers video editors, podcasters, and solo developers to generate high-quality, dynamic audio without needing a degree in sound synthesis. The AI handles all the complex modeling, letting the creator focus purely on the creative vision.

This flowchart shows the core concepts of sound synthesis that power both traditional and AI-driven procedural audio.

Every step in that process—from shaping raw waveforms to piecing together textures—is a fundamental technique that AI can now automate. The result is a workflow that delivers incredibly complex sounds from simple instructions, saving a massive amount of time and opening up creative avenues that used to be out of reach for most people.

It’s one thing to talk about the theory, but seeing procedural audio in action is where it really clicks. The biggest impact is in industries where dynamic, responsive sound is a fundamental part of the experience. Gaming, film, and virtual reality have all become incredible testing grounds for how real-time sound synthesis can build deeper, more believable worlds.

These applications aren't just about adding a bit of variety. We're talking about algorithms crafting entire soundscapes that would be simply impossible to build with static, pre-recorded clips. From sprawling alien planets to busy city streets, procedural techniques are what make these digital environments feel truly alive.

Video games are probably the clearest and most widespread example of procedural audio at work. In modern game design, the world has to react to everything the player does, and procedural audio is what makes that happen sonically. It ensures the soundscape is just as dynamic as the gameplay.

A classic case study is the space exploration game No Man's Sky. With quintillions of algorithmically generated planets, there was no way the team could create unique audio assets for every single world. Instead, they built a procedural system that generates sounds for alien creatures, weather, and environments on the fly. The result is that every player's auditory experience is as unique as the planets they land on.

This isn't a new concept, though. The 1999 release of Unreal Tournament was a huge step forward, with over 50% of its immersive soundscapes generated in real-time. By using algorithms to adapt sounds like weapon fire based on distance and environment, the developers cut their asset storage needs by a massive 70% compared to using traditional samples. You can explore the timeline of powerful music technologies to see how these early innovations really set the stage for today's complex systems.

Film might be a linear medium, but that doesn’t mean procedural audio can’t offer huge creative and efficiency wins during post-production. Sound designers often have to build complex, evolving ambiences—think of a chaotic city street or a dense, living jungle.

Instead of layering dozens of static audio tracks, a procedural model can generate the entire soundscape. A designer can then "direct" the audio in real time, adjusting parameters like traffic density, the time of day, or the intensity of rainfall to perfectly match the on-screen action.

This gives filmmakers incredible control. If a scene's mood shifts, the soundscape can be tweaked instantly without the headache of finding and re-editing a bunch of new sound files. It transforms ambient sound design from an asset-management chore into a creative performance.
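One way to picture "directing" an ambience is to treat a control such as rainfall intensity as the rate of a random event scheduler. The Python sketch below assumes raindrops arrive as a Poisson process, so the gaps between them are exponentially distributed; that modelling choice, the function names, and the 2 to 400 drops-per-second range are all assumptions made for this example.

```python
import random


def rain_rate(intensity):
    """Map a 0..1 intensity control to drops per second (assumed range)."""
    intensity = min(1.0, max(0.0, intensity))
    return 2.0 + intensity * 398.0


def schedule_events(duration_s, rate_per_s, seed=0):
    """Return sorted onset times (seconds) for randomly spaced events."""
    times = []
    if rate_per_s <= 0:
        return times
    rng = random.Random(seed)
    t = 0.0
    while True:
        t += rng.expovariate(rate_per_s)  # random gap until the next drop
        if t >= duration_s:
            break
        times.append(t)
    return times


drizzle = schedule_events(10.0, rain_rate(0.0), seed=1)
storm = schedule_events(10.0, rain_rate(1.0), seed=1)
```

Each onset time would trigger one short synthesized drop. The same scheduler could drive car passes for a "traffic density" control.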

In virtual and augmented reality (VR/AR), procedural audio goes from being helpful to being absolutely critical for immersion. VR's entire magic trick is convincing your brain that a digital world is real, and the audio has to react instantly and perfectly to your every move.

Think about these key moments in a VR experience:

Trying to cover every possible interaction with a library of pre-recorded files would be a nightmare. Procedural audio solves this by generating these sounds in the moment, creating a seamless and reactive auditory experience that is fundamental to feeling present and believing the world around you.

Diving into procedural audio can feel like a lot to take in, especially with all the different software options out there. The key is to remember that the "best" tool really just depends on what you're trying to build, how comfortable you are with technical details, and your specific creative vision.

Generally, the landscape splits into three main camps. Think of them as different paths to the same destination, each offering a unique balance of control, complexity, and sheer speed. Let's dig into what they are so you can figure out which one fits your project.

When you need sound to react to every little thing happening in a game, nothing beats dedicated game audio middleware. Tools like Wwise and FMOD are the industry titans here. They act as a sophisticated bridge connecting a game engine (like Unity or Unreal) to the sound playback system.

These platforms give you a visual environment to build intricate sound systems without having to be a programmer. You can link audio parameters directly to in-game data—think connecting a vehicle's engine pitch to its actual speed, or the sound of a footstep to the surface material the character is walking on. The control is immense, but so is the learning curve. Mastering these tools is a full-time job, making them a perfect fit for technical sound designers on big, interactive projects.

For a more detailed breakdown, you can check out our game audio middleware comparison.

If you've ever produced music, you're probably already familiar with a Digital Audio Workstation (DAW). Programs like Ableton Live, Logic Pro, and FL Studio are absolute powerhouses for sound creation, and they are fantastic for procedural audio design work.

Using the built-in synths and a universe of third-party plugins, you can build sounds from scratch using any synthesis method you can imagine. This approach is ideal when you need to generate a huge library of sound variations that don't need to react dynamically inside a game. You can set up your synth, automate a few parameters, and export hundreds of unique laser zaps or monster growls as ready-to-use audio files. It’s a great way to create rich, varied assets without getting tangled up in game engine logic.
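The "set up a synth, vary a few parameters, export many files" workflow can be imitated outside a DAW with Python's standard `wave` module. This is only a sketch of the idea: the `laser_zap` recipe (a falling sine sweep with a fade-out), the parameter ranges, and the file naming are assumptions for illustration, not a description of how any DAW exports audio.

```python
import math
import os
import random
import struct
import tempfile
import wave

SAMPLE_RATE = 44_100  # samples per second (assumed for this sketch)


def laser_zap(start_hz, end_hz, duration_s):
    """A sine tone gliding from start_hz to end_hz with a linear fade-out."""
    n = round(duration_s * SAMPLE_RATE)
    samples, phase = [], 0.0
    for i in range(n):
        progress = i / n
        freq = start_hz * (end_hz / start_hz) ** progress  # exponential glide
        phase += 2.0 * math.pi * freq / SAMPLE_RATE
        samples.append(math.sin(phase) * (1.0 - progress))
    return samples


def write_wav(path, samples):
    """Write float samples in [-1, 1] as 16-bit mono PCM."""
    frames = b"".join(
        struct.pack("<h", int(max(-1.0, min(1.0, s)) * 32767)) for s in samples
    )
    with wave.open(path, "wb") as f:
        f.setnchannels(1)
        f.setsampwidth(2)
        f.setframerate(SAMPLE_RATE)
        f.writeframes(frames)


def export_variations(out_dir, count, seed=0):
    """Render `count` randomized zaps into out_dir and return their paths."""
    rng = random.Random(seed)
    paths = []
    for i in range(count):
        zap = laser_zap(rng.uniform(1800.0, 3200.0),
                        rng.uniform(150.0, 500.0),
                        rng.uniform(0.15, 0.4))
        path = os.path.join(out_dir, f"laser_zap_{i:03d}.wav")
        write_wav(path, zap)
        paths.append(path)
    return paths


if __name__ == "__main__":
    # Demo: render a small batch into a temporary folder.
    with tempfile.TemporaryDirectory() as folder:
        print(len(export_variations(folder, 10)), "files written")
```

Because the randomness is seeded, the same seed reproduces the same batch, which is handy when a variation needs to be regenerated later.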

The most recent and arguably most accessible way to get into procedural audio is through AI-powered platforms. These tools do the heavy lifting for you, hiding all the complex math and synthesis behind a simple, intuitive interface. Instead of twisting knobs, you just describe what you want.

At SFX Engine, this is exactly what we focus on. Rather than wrestling with a complex synth patch, you can just type a prompt like "sci-fi laser cannon charging up and firing" and get a complete, high-quality sound effect in seconds.

This approach shatters the technical barrier. For teams that need thousands of unique audio assets, our API can automate sound generation at scale, plugging directly into a production workflow. It’s the perfect solution for anyone who needs custom sound effects fast, without needing to become a synthesis expert first. It truly puts procedural audio power into the hands of any creator with an idea.

Choosing the right toolset can make or break your workflow. This table breaks down the main options to help you decide which path is the best fit for your project's scope, team's skill set, and creative ambitions.

| Tool Type | Best For | Key Features | Learning Curve |
|---|---|---|---|
| Game Audio Middleware | Technical sound designers building deeply interactive game audio. | Visual scripting, direct game parameter linking, platform optimization. | High |
| Digital Audio Workstations | Sound designers creating large libraries of sound variations for offline use. | Powerful synthesizers, vast plugin support, familiar music production workflow. | Medium |
| AI-Powered Platforms | Game developers, animators, and creators needing custom SFX quickly and at scale. | Natural language prompts, API integration, rapid iteration and generation. | Low |

Ultimately, whether you choose the deep control of middleware, the creative freedom of a DAW, or the incredible speed of an AI platform, the goal is the same: to create dynamic, living sound that brings your project to life.

The world of procedural audio is getting a massive upgrade from artificial intelligence. We're moving away from rigid, pre-programmed algorithms and stepping into an era where sound systems can actually learn and create with a level of nuance we've never seen before. This isn't just about how sounds are made; it's changing who gets to be a sound designer.

Think of it this way: instead of a sound artist manually programming the physics of a metal crash, an AI model can be trained on thousands of real-world examples. It starts to understand what makes metal sound heavy, thin, or resonant. The result? You can generate incredibly realistic and varied sounds from a simple text prompt, completely rethinking the entire creative process.

This shift is a huge leap forward in both efficiency and accessibility. While powerful, traditional procedural audio systems demanded a ton of technical know-how to build and tweak. AI-driven platforms completely flip that around, putting the creative vision back in the driver's seat.

The real game-changer is this: AI handles the complex, nitty-gritty synthesis modeling. This frees you up to think like a director, not an engineer. Your job is no longer to fiddle with algorithms but to describe a sonic vision in plain English and let the AI bring it to life.

Tools like SFX Engine are leading this charge. By building sophisticated AI into their core, they're making procedural audio a practical reality for everyone, from indie game developers to professional video editors. The power to just type a prompt and get a custom, ready-to-use sound effect saves an incredible amount of time and opens up creative possibilities that used to be locked away behind a wall of code.

Looking down the road, the combination of AI and procedural audio is paving the way for truly adaptive soundscapes. Imagine in-game audio that doesn't just react to what you do, but also responds to the emotional tone of the story. An AI could subtly change the ambient sound—making the wind howl a little louder or adding a tense undertone to the music—to amplify feelings of suspense or relief in real time.

This isn't just about making sound effects faster. It's about building deeper, more emotionally engaging experiences. As sound design continues to merge with artificial intelligence, partnering with skilled AI development services will become essential for any project looking to break new ground. The future of sound isn't just procedural—it's perceptive, intelligent, and woven directly into the fabric of the story.

Diving into procedural audio can feel a bit like stepping into a new world, especially if you're used to traditional sound design. It's only natural to have questions. Let's clear up some of the most common ones we hear from creators.

Asking whether procedural audio is better than recorded samples is a bit like asking if a paintbrush is better than a camera. They're different tools for different jobs, and neither one is universally "better."

Recorded samples are your go-to for high-fidelity, specific sounds. Think of a hero's signature sword clash or the satisfying click of a specific UI button. When you need a sound to be exactly the same every time with maximum realism, samples are unbeatable.

Procedural audio, on the other hand, is the master of dynamic, ever-changing soundscapes. Its tiny footprint and ability to react in real-time make it essential for creating immersive worlds where no two footsteps or gusts of wind should sound identical. Repetition kills immersion, and procedural audio is the cure.

In professional workflows, the magic often happens when you blend both. Use high-quality samples for the hero moments and let procedural generation handle the living, breathing textures of the world around them.

You no longer need to know how to code to use procedural audio. It's true that it used to be the exclusive domain of programmers and highly technical sound designers. You had to be comfortable with code to make anything happen.

Tools like Wwise and FMOD started opening the doors with visual scripting, which was a huge step forward. But today, the game has completely changed. AI-powered platforms have torn down the final barriers.

With a service like SFX Engine, you don't need to know anything about the underlying tech. You just describe the sound you imagine using plain text. This puts the power directly into the hands of artists, editors, and designers, letting them focus entirely on the creative vision, not the code behind it.

Procedural audio is incredibly versatile, but it definitely has its sweet spots. It excels at creating things that are naturally chaotic or defined by their constant change.

Where does it still have room to grow? Recreating the subtle, emotional nuance of highly complex organic sounds—like the human voice or a specific Stradivarius violin—is still a massive challenge. That said, the latest AI synthesis models are getting eerily good at learning the acoustic fingerprints of these sounds, so that gap is closing faster than you might think.

Ready to stop searching for the perfect sound effect and start creating it? With SFX Engine, you can generate custom, royalty-free audio in seconds using simple text prompts. Try it for free and bring your sonic vision to life.